
Building an AI Voice Transcription Feature — Lessons That Still Matter in 2026

In September 2024, I shipped a voice transcription feature into Letterbird, a quick-note app where users record audio and have it transcribed by OpenAI's Whisper API moments after they stop recording. I filmed the whole build as a day-in-the-life so other developers could see the messy middle: the debugging, the wrong turns, the "why is this API route returning 401?" moments.

Eighteen months later, voice AI is no longer a feature you bolt onto a side project. At Agency7, we now ship full AI voice agents for Edmonton businesses — agents that answer calls, book appointments, qualify leads, and hand off to a human when they should. The Letterbird transcription project turned out to be the foundation. This post revisits what I learned building it and maps those lessons onto what voice AI actually costs, breaks, and delivers in 2026.

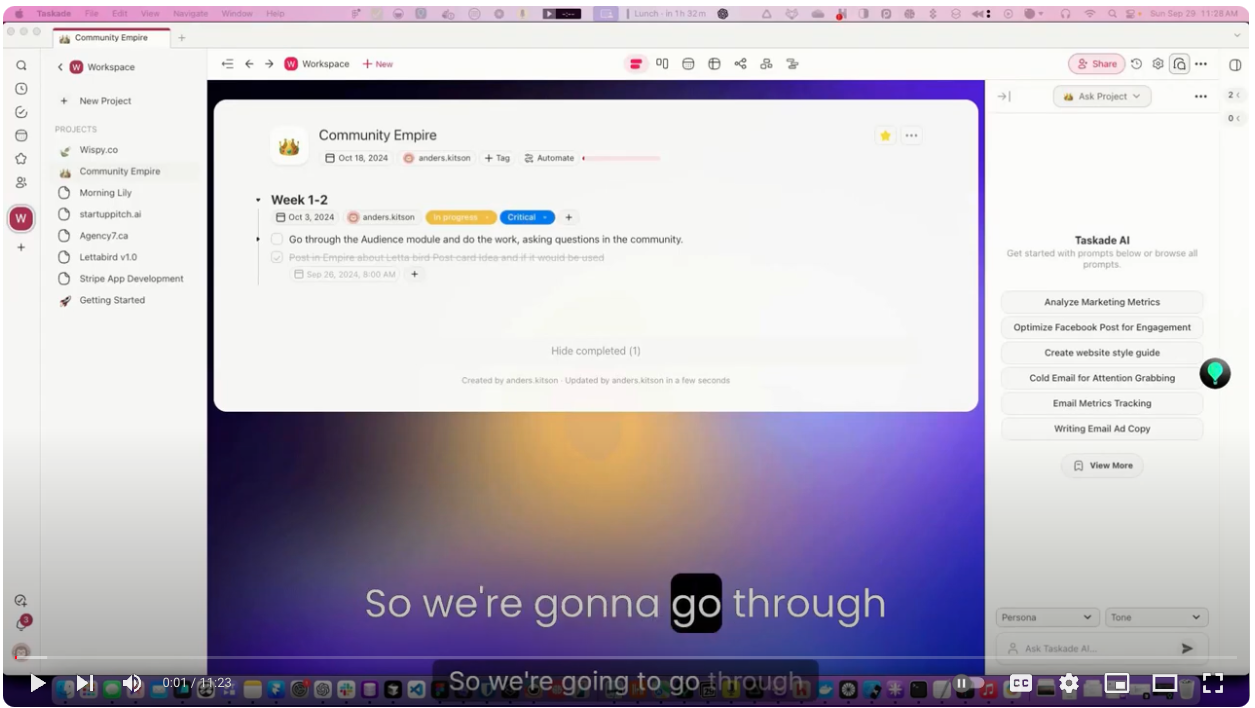

👉 Watch the original 2024 build video

What the 2024 build actually involved

The project had a simple premise: press a button, record audio in the browser (or on iOS), POST the file to a Next.js API route, call Whisper, return the transcript. Three moving parts, each with its own failure mode.

- Browser audio capture via the MediaRecorder API, encoding to `audio/webm` on desktop and `audio/mp4` on Safari.

- API route that streamed the file to OpenAI's `whisper-1` endpoint and returned the text.

- UI state tracking "recording / uploading / transcribing / done / error": boring but non-negotiable.
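A minimal sketch of the format choice, assuming the recorder should prefer Opus in WebM and fall back to MP4 for Safari. `RECORDING_FORMATS`, `pickRecordingFormat`, and `recordingFilename` are hypothetical names, not Letterbird code. The support check is injected so the function runs outside a browser; in a real page you would pass `MediaRecorder.isTypeSupported`. The extension travels with the MIME type because the transcription endpoint appears to rely on the uploaded filename's extension to identify the container, so it is safer to keep the two in sync.

```typescript
// One container/codec choice the recorder can use, plus the matching file extension.
interface RecordingFormat {
  mimeType: string;
  extension: string;
}

// Preference order is an assumption: Opus in WebM, then plain WebM, then MP4 (Safari).
const RECORDING_FORMATS: ReadonlyArray<RecordingFormat> = [
  { mimeType: "audio/webm;codecs=opus", extension: "webm" },
  { mimeType: "audio/webm", extension: "webm" },
  { mimeType: "audio/mp4", extension: "mp4" },
];

// Returns the first format the recorder supports, or null if none are.
// In the browser: pickRecordingFormat((t) => MediaRecorder.isTypeSupported(t)).
function pickRecordingFormat(
  isSupported: (mimeType: string) => boolean,
): RecordingFormat | null {
  for (const format of RECORDING_FORMATS) {
    if (isSupported(format.mimeType)) return format;
  }
  return null;
}

// Filename to use on upload, so the extension always matches the container.
function recordingFilename(format: RecordingFormat): string {
  return `recording.${format.extension}`;
}
```

Passing the chosen `mimeType` to `new MediaRecorder(stream, { mimeType })` and reusing `recordingFilename` at upload time keeps the codec assumption in one place instead of scattered across the client and the API route.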

The painful parts weren't the AI. They were the seams between pieces: iOS Safari silently dropping audio when the tab lost focus, Next.js file-size limits tripping on longer recordings, and WebM files that played fine in the browser but that Whisper couldn't parse because the duration metadata was missing. Each of those took a day.

What I got right — and what I'd change now

Got right:

- Ship an end-to-end vertical slice before polishing anything. I had a janky transcript in the UI on day one. That let me fix real bugs instead of imaginary ones.

- Whisper as the model. Even now, Whisper's cost-accuracy point is hard to beat for asynchronous transcription (recorded audio, not live streams).

- Keep the backend boring. A single Next.js API route with no queue, no workers. Fine for a recording-based app with 30-second typical clips.
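The "keep the backend boring" shape can be sketched as one route handler that accepts the upload, checks its size, forwards it to OpenAI's transcription endpoint with `model=whisper-1`, and returns the text. This is a minimal sketch, not the Letterbird code. It assumes an App Router-style `POST(request)` handler, a client that sends the clip in a form field named `audio` (hypothetical), and OpenAI's documented 25 MB per-file upload limit. `makeTranscribeHandler` is a hypothetical factory that injects the API key and `fetch` so the handler can be exercised without network access.

```typescript
// Assumptions: OpenAI's hosted transcription endpoint and its 25 MB per-file cap.
const TRANSCRIPTIONS_URL = "https://api.openai.com/v1/audio/transcriptions";
const MAX_UPLOAD_BYTES = 25 * 1024 * 1024;

type FetchLike = (url: string, init: RequestInit) => Promise<Response>;

function jsonResponse(body: unknown, status: number): Response {
  return new Response(JSON.stringify(body), {
    status,
    headers: { "Content-Type": "application/json" },
  });
}

// Hypothetical factory: returns a POST handler with the API key and fetch injected.
function makeTranscribeHandler(apiKey: string, fetchImpl: FetchLike = fetch) {
  return async function POST(request: Request): Promise<Response> {
    const incoming = await request.formData();
    const audio = incoming.get("audio"); // field name is an assumption about the client
    if (!(audio instanceof Blob) || audio.size === 0) {
      return jsonResponse({ error: "No audio uploaded" }, 400);
    }
    if (audio.size > MAX_UPLOAD_BYTES) {
      return jsonResponse({ error: "Recording exceeds the 25 MB upload limit" }, 413);
    }

    // Forward the clip; the filename's extension should match its container.
    const outgoing = new FormData();
    outgoing.append("file", audio, audio.name || "recording.webm");
    outgoing.append("model", "whisper-1");

    const upstream = await fetchImpl(TRANSCRIPTIONS_URL, {
      method: "POST",
      headers: { Authorization: `Bearer ${apiKey}` },
      body: outgoing,
    });
    if (!upstream.ok) {
      // Surface the upstream status so failures show up in logs instead of vanishing.
      return jsonResponse({ error: `Transcription failed (${upstream.status})` }, 502);
    }
    const { text } = (await upstream.json()) as { text: string };
    return jsonResponse({ text }, 200);
  };
}
```

In a Next.js route file this would be wired up as something like `export const POST = makeTranscribeHandler(process.env.OPENAI_API_KEY ?? "")`. A missing or malformed `Authorization: Bearer` header is one common way to end up staring at a 401.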

Would change:

- I'd skip MediaRecorder for anything serious. For 2026 production voice work, we use WebRTC-native stacks (LiveKit, Pipecat) that handle iOS backgrounding, silence detection, and codec negotiation out of the box.

- I'd instrument from day one. No error monitoring, no latency traces, no cost tracking per request. When a transcription silently failed, I had to repro it by hand. Today every voice-AI build ships with Sentry + OpenTelemetry on the audio path from minute one.

- I wouldn't improvise the UI state machine. XState, or even a small explicit reducer, would have saved two hours of "why did it flip from uploading to idle?" The UI bugs were worse than the API bugs.
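The "small reducer" option looks roughly like this. It is a sketch, not XState and not the original Letterbird code: the states mirror the recording / uploading / transcribing / done / error flow described earlier, plus an `idle` starting state, and any event that doesn't make sense in the current state is ignored instead of flipping the UI. The type and event names are hypothetical.

```typescript
// The UI states from the flow above, plus an idle starting state.
type NoteState =
  | { status: "idle" }
  | { status: "recording" }
  | { status: "uploading" }
  | { status: "transcribing" }
  | { status: "done"; transcript: string }
  | { status: "error"; message: string };

// Events the UI and network layer can dispatch (names are illustrative).
type NoteEvent =
  | { type: "START" }
  | { type: "STOP" }
  | { type: "UPLOADED" }
  | { type: "TRANSCRIBED"; transcript: string }
  | { type: "FAILED"; message: string }
  | { type: "RESET" };

// Each state accepts only the events that make sense for it; anything else is ignored.
function transcriptionReducer(state: NoteState, event: NoteEvent): NoteState {
  switch (state.status) {
    case "idle":
      return event.type === "START" ? { status: "recording" } : state;
    case "recording":
      if (event.type === "STOP") return { status: "uploading" };
      break;
    case "uploading":
      if (event.type === "UPLOADED") return { status: "transcribing" };
      break;
    case "transcribing":
      if (event.type === "TRANSCRIBED") {
        return { status: "done", transcript: event.transcript };
      }
      break;
    case "done":
    case "error":
      return event.type === "RESET" ? { status: "idle" } : state;
  }
  // Only in-flight states (recording, uploading, transcribing) reach this point.
  return event.type === "FAILED" ? { status: "error", message: event.message } : state;
}
```

In React it plugs into `useReducer(transcriptionReducer, { status: "idle" })`. Because `RESET` is only honored from `done` or `error`, an upload in flight can't jump back to idle.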

From transcription to real-time voice agents

Transcription is the batch version of voice AI: record, send, wait, display. A voice agent is the streaming version: the caller is still speaking, and the agent needs to understand, reason, and respond in under 700 milliseconds or the conversation feels dead. That's the whole game in 2026.

The Letterbird transcription work taught me the substrate — file formats, codecs, API error handling, retry logic, cost-per-minute math — but voice agents add:

- Streaming ASR (automatic speech recognition) that returns interim results every 100-200ms, not a finished transcript

- VAD (voice activity detection) to know when the caller has stopped talking

- Barge-in handling so the agent stops mid-sentence when the caller interrupts

- Tool calling so the agent can look up availability, write to a CRM, or route the call

- TTS (text-to-speech) that sounds human — this is where ElevenLabs earns its fee

Every one of those is a rabbit hole. The 2024 "record and transcribe" flow is now roughly 5% of a production voice-agent build.
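VAD is a good example of how deep those holes go. The simplest possible version is an energy threshold with a hangover period: a frame counts as speech if its RMS level crosses a threshold, and the turn ends once enough consecutive quiet frames follow speech. This is a deliberately naive sketch for illustration; production stacks such as LiveKit and Pipecat generally rely on trained VAD models instead, and the threshold and timing values here are placeholders, not tuned numbers.

```typescript
// Root-mean-square level of one frame of PCM samples in the range [-1, 1].
function rms(frame: Float32Array): number {
  if (frame.length === 0) return 0;
  let sum = 0;
  for (let i = 0; i < frame.length; i++) sum += frame[i] * frame[i];
  return Math.sqrt(sum / frame.length);
}

interface EndOfTurnOptions {
  threshold: number; // RMS at or above this counts as speech (placeholder; tune per line/mic)
  frameMs: number; // duration represented by each frame
  hangoverMs: number; // silence required after speech before the turn is considered over
}

// Returns a detector fed one frame at a time. It returns true once per turn,
// on the frame where enough silence has followed speech, then resets.
function createEndOfTurnDetector(opts: EndOfTurnOptions): (frame: Float32Array) => boolean {
  let heardSpeech = false;
  let silentMs = 0;
  return (frame: Float32Array): boolean => {
    if (rms(frame) >= opts.threshold) {
      heardSpeech = true;
      silentMs = 0;
      return false;
    }
    if (!heardSpeech) return false; // leading silence before anyone speaks
    silentMs += opts.frameMs;
    if (silentMs < opts.hangoverMs) return false;
    heardSpeech = false;
    silentMs = 0;
    return true;
  };
}
```

The hangover is the main latency knob: every millisecond of required silence is added to the response time, but too short a window cuts callers off mid-pause. Running the same speech test on the caller's audio while the agent is talking is one simple way to trigger barge-in.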

What a 2026 voice agent actually costs to build

Rough numbers from Agency7 engagements with Edmonton service businesses:

- Setup & configuration: $3,000-$6,000 depending on CRM integration complexity

- Monthly retainer (tuning, monitoring, prompt updates): $200-$500/month

- Per-minute runtime cost (ASR + LLM + TTS + telephony): $0.15-$0.30 per minute of talk time

- Typical payback window for a clinic or trade: 2-4 months, driven mostly by after-hours call capture
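To turn those ranges into a monthly number, multiply talk minutes by the per-minute runtime range and add the retainer range; the first year adds the one-time setup. The sketch below does exactly that with the figures quoted above. The monthly talk volume is an input you supply, and the 1,000 minutes used in the example is hypothetical, not client data.

```typescript
interface CostRange {
  low: number;
  high: number;
}

// Ranges quoted in the list above.
const SETUP: CostRange = { low: 3000, high: 6000 };
const RETAINER_PER_MONTH: CostRange = { low: 200, high: 500 };
const RUNTIME_PER_MINUTE: CostRange = { low: 0.15, high: 0.3 };

// Monthly spend = talk minutes x per-minute runtime + retainer.
function monthlyCost(talkMinutes: number): CostRange {
  return {
    low: talkMinutes * RUNTIME_PER_MINUTE.low + RETAINER_PER_MONTH.low,
    high: talkMinutes * RUNTIME_PER_MINUTE.high + RETAINER_PER_MONTH.high,
  };
}

// First-year spend = one-time setup + 12 months at the given volume.
function firstYearCost(talkMinutesPerMonth: number): CostRange {
  const monthly = monthlyCost(talkMinutesPerMonth);
  return {
    low: SETUP.low + 12 * monthly.low,
    high: SETUP.high + 12 * monthly.high,
  };
}
```

At a hypothetical 1,000 talk minutes a month, that works out to $350-$800 per month, or $7,200-$15,600 for the first year including setup.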

If you want the longer breakdown, we wrote it up here: AI Voice Agents for Edmonton Trades vs an Answering Service.

Lessons that transferred directly from Letterbird to client work

- The AI is the easy part. OpenAI, Anthropic, ElevenLabs, Deepgram — all rock-solid. The hard part is everything around the model: auth, file handling, error recovery, cost control, idempotency.

- Instrument the boundary calls. Every external API call gets a trace. Latency budgets matter more than model quality once you're live.

- Test on the actual device, not the emulator. iOS Safari will surprise you. Chrome on Windows will surprise you. Android's AudioRecord will surprise you.

- Write down your codec assumptions. `audio/webm;codecs=opus` vs. `audio/mp4` is the single most common source of silent bugs in browser audio.

- Cost-per-user should be in your head from day one. Voice AI that looks free at 10 users can bankrupt you at 1,000.
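A minimal sketch of what "every external API call gets a trace" can mean before any vendor SDK is involved: wrap each call, time it with `performance.now()`, and record whether it succeeded. `traced`, `callLog`, and `slowCalls` are hypothetical names; in production the records would go to Sentry or an OpenTelemetry exporter rather than an in-memory array.

```typescript
// One record per external call (a naming scheme like "openai.transcribe" is an assumption).
interface CallRecord {
  name: string;
  durationMs: number;
  ok: boolean;
}

// In-memory sink for illustration only.
const callLog: CallRecord[] = [];

// Times an async boundary call and records the outcome, rethrowing any error unchanged.
async function traced<T>(name: string, call: () => Promise<T>): Promise<T> {
  const start = performance.now();
  let ok = false;
  try {
    const result = await call();
    ok = true;
    return result;
  } finally {
    callLog.push({ name, durationMs: performance.now() - start, ok });
  }
}

// Calls that exceeded a latency budget, slowest first.
function slowCalls(budgetMs: number): CallRecord[] {
  return callLog
    .filter((r) => r.durationMs > budgetMs)
    .sort((a, b) => b.durationMs - a.durationMs);
}
```

Wrapping the transcription request as `traced("openai.transcribe", () => fetch(...))` gives per-call latency and failure counts from the first deploy, and `slowCalls` points at whichever boundary is eating the latency budget.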

Frequently asked questions

Is OpenAI Whisper still the best transcription model in 2026?

For asynchronous transcription of recorded audio in English, Whisper (v3 and its hosted variants) is still the default cost-accuracy pick. For live streaming voice agents, Deepgram Nova-3 and AssemblyAI Universal-2 typically beat Whisper on latency and real-time diarization.

Can a small Edmonton business run its own Whisper transcription?

Yes, but you usually shouldn't. Running Whisper locally requires GPU infrastructure, scaling logic, and monitoring. For under $0.006/minute you can hit OpenAI's hosted endpoint and skip the entire ops burden. Self-hosting only makes sense when you have strict data-residency requirements (e.g., healthcare records that can't leave Canada).

How is a voice agent different from transcription?

Transcription is one-way: audio goes in, text comes out. A voice agent is a two-way conversation — it listens, thinks, speaks, remembers context across turns, and takes actions (booking appointments, looking up information, routing calls). Transcription is a component of a voice agent, not the whole thing.
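The listen, think, act, speak cycle can be sketched as a single turn handler. It assumes a hypothetical `decide` function standing in for the LLM call, which either returns text to speak or names a tool to run. Tool results are appended to a shared history so context carries across turns, and a cap on tool calls ends in a hand-off line instead of an endless loop. Tool names such as `checkAvailability` are illustrative, not a real CRM API.

```typescript
interface TurnMessage {
  role: "caller" | "agent" | "tool";
  content: string;
}

// What the (hypothetical) LLM step returns: words to speak, or a tool to run.
type Decision =
  | { kind: "say"; text: string }
  | { kind: "tool"; name: string; args: Record<string, string> };

type AgentTool = (args: Record<string, string>) => Promise<string>;

const HANDOFF_LINE = "Let me connect you with someone who can help."; // placeholder wording

// Handles one caller utterance: lets the model run up to `maxToolCalls` tools,
// then returns what the agent should say. `history` is mutated so context carries over.
async function handleTurn(
  history: TurnMessage[],
  callerText: string,
  decide: (history: TurnMessage[]) => Promise<Decision>,
  tools: Partial<Record<string, AgentTool>>,
  maxToolCalls = 3,
): Promise<string> {
  history.push({ role: "caller", content: callerText });
  for (let step = 0; step <= maxToolCalls; step++) {
    const decision = await decide(history);
    if (decision.kind === "say") {
      history.push({ role: "agent", content: decision.text });
      return decision.text;
    }
    if (step === maxToolCalls) break; // tool budget spent; hand off instead
    const tool = tools[decision.name];
    const result = tool ? await tool(decision.args) : `Unknown tool: ${decision.name}`;
    history.push({ role: "tool", content: result });
  }
  history.push({ role: "agent", content: HANDOFF_LINE });
  return HANDOFF_LINE;
}
```

The transcription step from the Letterbird build would sit in front of this (producing `callerText`) and TTS behind it; the turn handler is the part transcription never had.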

What's the minimum latency for a voice agent to feel natural?

End-to-end (caller finishes speaking → agent starts responding) should be under 800ms. Under 500ms feels snappy. Over 1.2 seconds feels robotic and callers start repeating themselves. Hitting under 800ms consistently requires streaming ASR, a fast LLM like GPT-4o-mini or Claude Haiku 4.5, and a low-latency TTS like ElevenLabs Turbo.
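A small helper makes those thresholds checkable against traced numbers. It is a sketch under two assumptions: the 800 ms to 1.2 s band, which the answer above doesn't name, is labelled "over-budget", and end-to-end latency is approximated as the sum of each sequential stage's time to first output (end-of-speech detection, ASR finalization, LLM first token, TTS first audio), since in a streaming pipeline each stage can start as soon as the previous one emits anything.

```typescript
type LatencyRating = "snappy" | "on-target" | "over-budget" | "robotic";

// Bands from the answer above: under 500 ms snappy, under 800 ms on target,
// over 1.2 s robotic. Labelling 800 ms to 1.2 s "over-budget" is an interpretation.
function rateResponseLatency(ms: number): LatencyRating {
  if (ms < 500) return "snappy";
  if (ms < 800) return "on-target";
  if (ms <= 1200) return "over-budget";
  return "robotic";
}

interface StageTiming {
  stage: string; // free-form label, e.g. "LLM first token"
  ms: number; // that stage's time to first output
}

// Rough model: each stage starts when the previous one emits its first output.
function endToEndMs(stages: ReadonlyArray<StageTiming>): number {
  return stages.reduce((total, s) => total + s.ms, 0);
}
```

Feed it stage timings from your own traces rather than vendor spec sheets; the sum tells you which stage has to give when the total lands over budget.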

Can I use the same stack for English and Spanish callers in Edmonton?

Yes. Whisper, Deepgram, and ElevenLabs all handle English + Spanish natively. For Edmonton's Cantonese, Mandarin, Punjabi, and Tagalog-speaking customer bases, model quality is good but not as polished — we typically pair a multilingual ASR with an English-first LLM and explicit language-detection routing.
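One possible shape for the "explicit language-detection routing" step: take the language code and confidence reported by the ASR, keep the call with the agent when the language is one the deployment is configured for, and hand off to a human otherwise. The language list, the 0.7 confidence cut-off, and `routeByLanguage` are assumptions for illustration; real ASR providers report detection results in their own formats.

```typescript
type LanguageRoute =
  | { kind: "agent"; language: string }
  | { kind: "human"; reason: string };

// Languages this hypothetical deployment handles, as ISO 639 codes ("zh" standing in for Mandarin).
const AGENT_LANGUAGES: ReadonlySet<string> = new Set(["en", "es", "yue", "zh", "pa", "tl"]);

// Routes a call based on the ASR's language detection. The default cut-off is a placeholder.
function routeByLanguage(detected: string, confidence: number, minConfidence = 0.7): LanguageRoute {
  if (confidence < minConfidence) {
    return { kind: "human", reason: "language detection not confident enough" };
  }
  if (!AGENT_LANGUAGES.has(detected)) {
    return { kind: "human", reason: `unsupported language: ${detected}` };
  }
  return { kind: "agent", language: detected };
}
```

Routing low-confidence detections to a person rather than guessing keeps a misheard language from turning into a confidently wrong conversation.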

How long does it take to ship a production voice agent?

A focused build for a single workflow (e.g., dental appointment booking, HVAC after-hours triage) is typically 2-4 weeks. Multi-workflow agents with deep CRM integration (HubSpot, ServiceTitan, Jobber) take 4-8 weeks. The long pole is almost always the CRM API integration, not the AI.

Where the 2024 project sits now

Letterbird's transcription feature still works. The Whisper call pattern I shipped 18 months ago is the same pattern we use for every dashboard-style transcription tool. What's changed is the ambition — we're no longer recording audio and waiting. We're having full conversations with callers, on behalf of Edmonton businesses, at 3 a.m.

If you're an Edmonton business owner wondering whether voice AI is ready for your operation, the answer is usually yes — but the framing matters. Don't ask "can it transcribe?" Ask "can it take the call I'm losing at 6:37 p.m. on a Tuesday?" That's the question we build for.

Curious what your business's voice agent would look like? Book a free conversation at consult.agency7.ca/ai-audit or see what we offer on the AI Voice Agents page.
